I haven’t posted a new blog in quite a while, but a Facebook conversation today made me think it might be a good time to repost my first blog on this site, from June 4, 2020. I do so in the hope that it may be of some value in these increasingly dark times.

Haiku for the Queen of the Night’s Bloom (July 19, 2024)

The Epiphyllum oxypetalum, commonly known as the Queen of the Night, is a night-blooming tropical epiphyte, an organism that grows on the surface of another plant and derives its moisture and nutrients from the air, rain, water (in marine environments), or from debris accumulating around it. It has huge, showy, and very fragrant white flowers that open only at night.

In June 2019 we rented an older beach house near the Matanzas Inlet, and in its beautiful gardens we discovered a large, mature Queen of the Night nestled in the boots of a sabal palm. Heeding the call of plant propagation, Susan took a clipping.

A few days ago, Susan realized that her Queen was getting ready to bloom. Last night, following an afternoon thunderstorm, its four flowers opened. Here are a few photos and a set of haiku inspired by a magical night. (Disclaimer: While everything related below occurred last night, I invoke artistic license to include a few earlier photos.)

I.

The tree frogs at dusk

Fearful of the night alone,

Ply their songs of love.

II.

A soft breeze rustles

Through the branches of the pine,

Kissed by full moon’s light.

III.

Another year past.

Our shy Queen welcomes this night

The mid-summer moon.

IV.

The Queen of the Night

Invites the moth’s soft caress,

Petals open wide.

V.

Distant lightning flash.

Cicada tymbals vibrate

Through the sultry air.

VI.

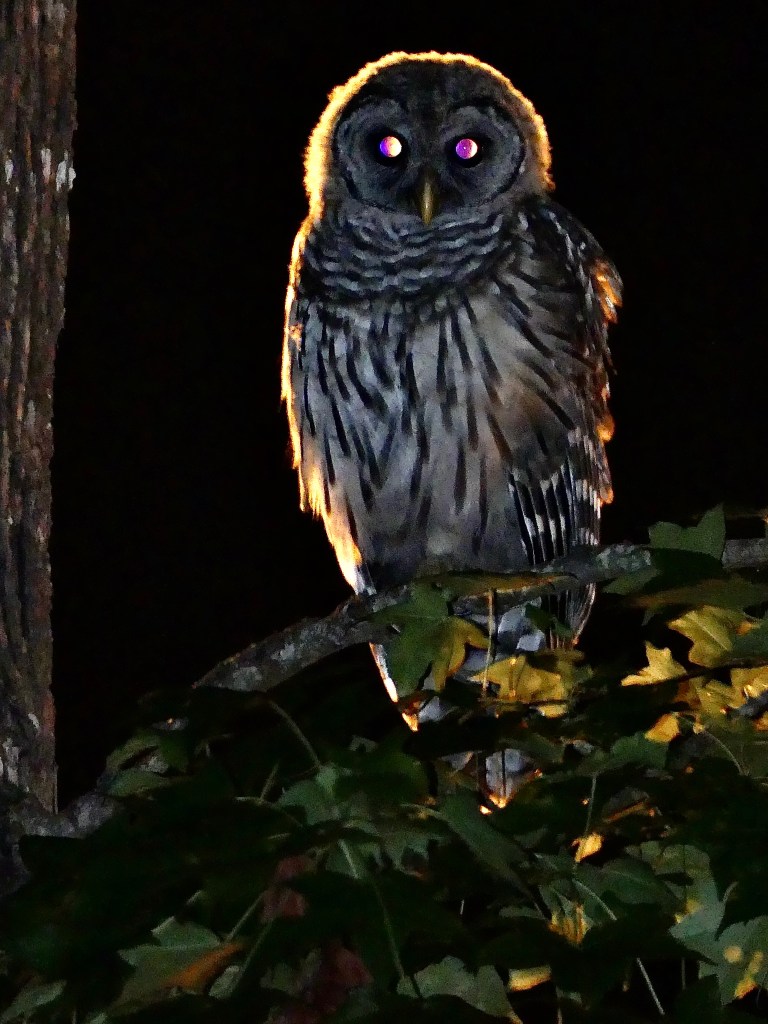

A cracker barred owl,

Obscure in the live oak, asks,

“Who cooks for you ’all?”

VII.

Early morning light.

The Queen nestles in her robes

And folds into sleep.

Our nation’s war on the young (June 28, 2022)

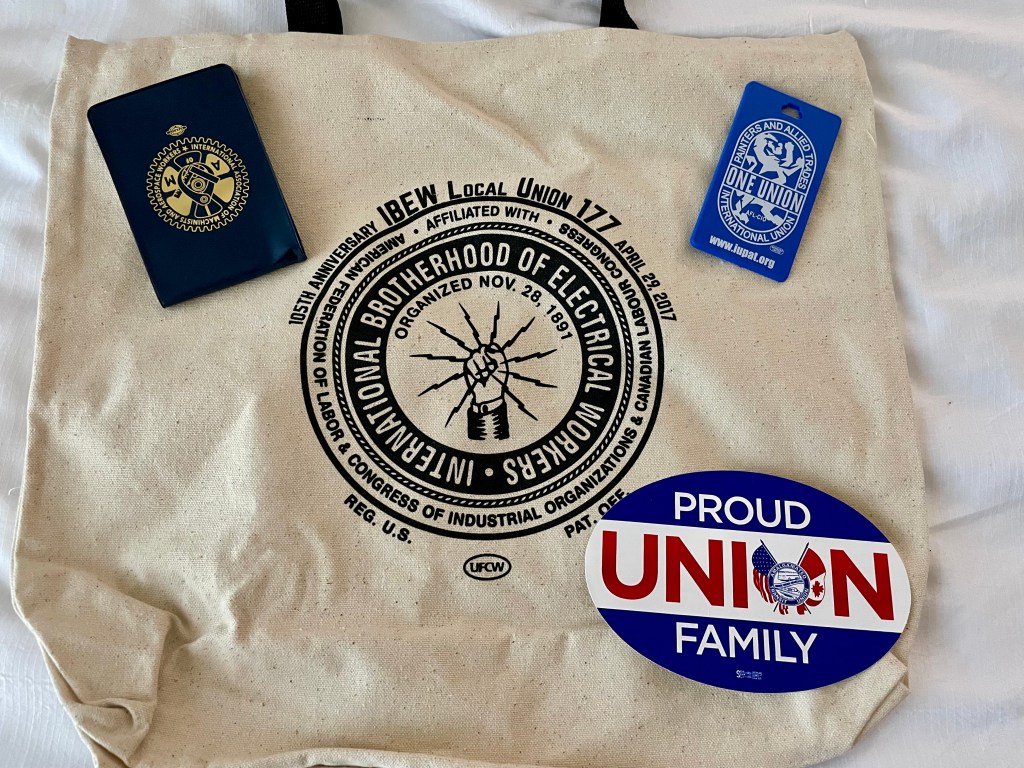

Here’s a dispatch from the site of Florida’s 2022 AFL-CIO COPE and Biennial Convention, where Susan served as one of this year’s AFT delegates.

I began writing this post from a hotel room just outside Disney Springs in Orlando and in the now notorious Reedy Creek Improvement District. (If you aren’t familiar with what’s happened in the last three months regarding Disney and Reedy Creek and its conflicts with Florida’s governor, the Wikipedia page gives a good summary, with links to earlier stories).

It was here, surrounded by dedicated activists from our nation’s great tradition of organized labor struggle, that I learned of the new revelations in Thursday’s testimony at the January 6 Congressional hearings and the Supreme Court’s decisions to both strike down New York state laws regulating the carrying of concealed weapons— as a CNN report notes, “law enforcement officers have told us time and again that allowing more guns into public places will make their jobs harder and more dangerous, but that’s exactly what that ruling does”—and reverse nearly a half-century of legal precedent and progress in fundamental human rights with the long expected overturning of Roe v. Wade. (I talked about the anti-life views of so many anti-choice and anti-abortion conservatives in a prior post, June 19, 2020. The same point is now highlighted on the NARAL website.)

Margaret Huang, president and CEO of the Southern Poverty Law Center, concisely summarizes the latter ruling in this way: “The decision to overturn Roe v. Wade is the culmination of a powerful, concerted movement to ensure that politicians control women’s bodies. Today’s decision flies in the face of the global progress to expand human rights protections for all people. While other countries have embraced the opportunity to expand human rights protections, the United States is a growing outlier with its efforts to deny basic human rights to more than half of the country’s population.”

To the list of those identified by Huang for whom this ruling will have especially “serious, long-term consequences”—including women, members of the LGBTQ+ community, those residing in the Deep South, and people living in poverty—I would add the community of the young, all those just commencing on or in the earliest days of their journey in the world. These rulings represent the latest front of an increasingly authoritarian, anti-democratic, and nihilistic assault on our nation’s democratic values by a militant gerontocracy—“government based on rule by old people”—and their enablers. A few years ago, the self-proclaimed “sexagenarian” Timothy Noah archly observed, “Somewhere along the way, a once-new nation conceived in liberty and dedicated to the proposition that all men are created equal (not men and women; that came later) became a wheezy gerontocracy. Our leaders, our electorate and our hallowed system of government itself are extremely old.” By 2022 it has become clear that we need to change this state of affairs, and change it soon.

Disney is by no means a bastion of progressivism. This is especially the case in its treatment of the workers in its far-flung theme park empire: “Disneyland employees report high instances of homelessness, food insecurity, ever-shifting work schedules, extra-long commutes, and low wages. While there is national attention on the minimum wage with successful local efforts to raise the minimum wage to $15 an hour, more than 85% of union workers at Disneyland earn less than $15 an hour.” (For a more detailed study of these issues, I’d also recommend Andrew Ross’s latest, Sunbelt Blues: The Failure of American Housing [2021]. Andrew generously came to visit UF last spring to talk about both his book and the current student debt crisis.)

However, in a sign of the times in which we live, and especially after recent legislation in Florida aimed at restricting free speech and academic freedom in our schools and universities (for a bit more on the roots of these changes, see my post from November 8, 2021), Disney World now feels like one of the more open and welcoming places remaining in our state.

Moreover, in a move that has further enflamed some of Disney’s far-right critics, in its recent Marvel universe blockbuster, Dr. Strange in the Multiverse of Madness— which we watched shortly after our return from Orlando— the character America Chavez (played by Xochitl Gomez) sports a flash jean-jacket with a progress pride pin near her left shoulder, a move praised by Sasha Misra of Stonewall: “You can’t be what you can’t see, and this sign of acceptance and inclusion will mean so much to LGBTQ+ people around the globe.” It turns out the character comes from an anti-Trump-verse where she is raised by two mothers and speaks Spanish as her first language. This resulted in the film being banned in Saudi Arabia, leading Dr. Strange himself (Benedict Cumberbatch) to comment: “We’ve come to know from those repressive regimes that their lack of tolerance is exclusionary to people who deserve to be not only included but celebrated for who they are, and made to feel a part of a society and a culture and not punished for their sexuality.”

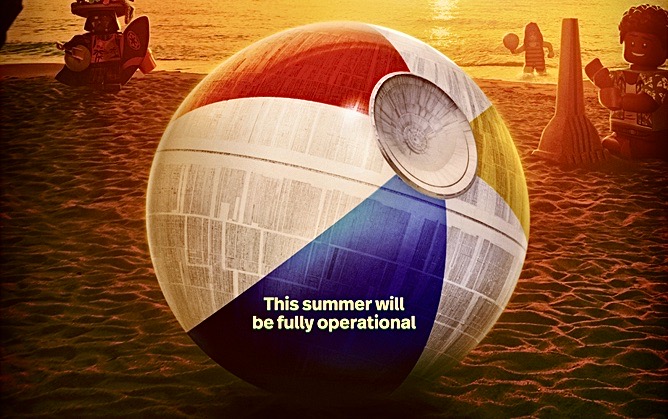

This will not long remain the case, however, if Ron DeSantis, Florida’s current governor and one of the leading contenders for the 2024 Republican nomination for President, has his way. DeSantis’s repressive regime has in recent months worked very hard to make Florida more like Saudi Arabia, signing into law the “Don’t Say Gay” bill, which bans public school teachers from initiating classroom discussion concerning sexual orientation or gender identity (“During a press conference ahead of signing the law, DeSantis said teaching kindergarten-aged kids that ‘they can be whatever they want to be’ was ‘inappropriate’ for children”); removing dozens of math textbooks from classrooms; passing laws to prohibit whatever they determine to be “woke ideology”; and even threatening to block performance funding to universities if they don’t “comply with the state’s new instructional guidelines outlined in the ‘Stop Woke Act’.” (In an email message accompanying the link to a video designed to explain the new laws to its faculty, the University of Florida’s current Provost noted that “It declares certain types of instruction to be ‘discriminatory’ as a matter of state law.”) All of this leads me to suspect that the recent Disney ad I included at the opening of my post could be read as a subtle nod to the Twitter tag first attached to him in the early days of the Covid-19 pandemic, #DeathSantis. After all, DeSantis is now very much a fully operational (Death) “Star” of the Party. And his menace is just as great as that of his predecessor and one-time mentor Trump.

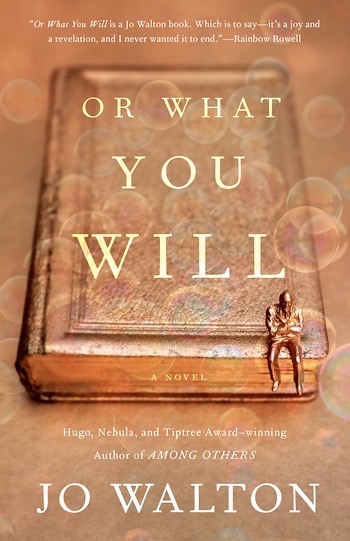

Shortly before our departure for Orlando, I had the honor to present a YouTube keynote talk at the “Conference of Science Fiction, Utopias, and Dystopias: Relationships in Crisis,” organized by the Laboratory of Studies of the Novel (LERo) at the University of São Paulo, Brazil. My paper is entitled “A Future Worthy of Her Spirit: Parents, Children, and the Neoliberal University in Kazuo Ishiguro’s Klara and the Sun.” (For a recording of the full talk, you can go to this site.) I ended my presentation with these words (all you need to know here is that Rick and Paul are two central characters in Klara and the Sun):

Let me conclude with Paul’s brief observation concerning Rick: “Rick’s turned into a fine young man. I hope he’s able to find a path through the mess we’ve bequeathed to his generation” (232). These sentiments apply equally to Ishiguro’s Josie, and to my children Nadia and Owen, and indeed so many of the students I, and I hope many of you, have had the great privilege to encounter in recent years. This then may be the most important lesson of Klara and the Sun for those of us who have bequeathed to their generation such a mess of the planet: the time is long since passed for us “normal kids” to stop telling a younger generation how they should be, and instead begin to listen to their ideas of how we all together could be.

As so many commentators have noted in various ways, the events of late last week have shaken our democracy to its very core— and if Clarence Thomas gets his wish, state legislatures will soon be empowered to strip away any rights that challenge his deeply patriarchal and intolerant version of Christianity. One reporter in the state whose senior senator is Mitch McConnell—who as much as anyone is the mastermind of what Jennifer Rubin refers to as the current court’s “war on modern America”—points out, “Theocracy may be too weak a word to describe what’s coming down the pike.”

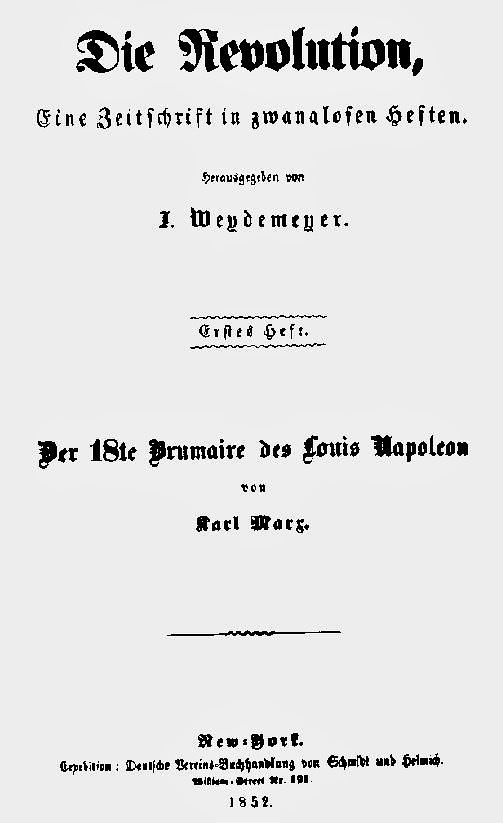

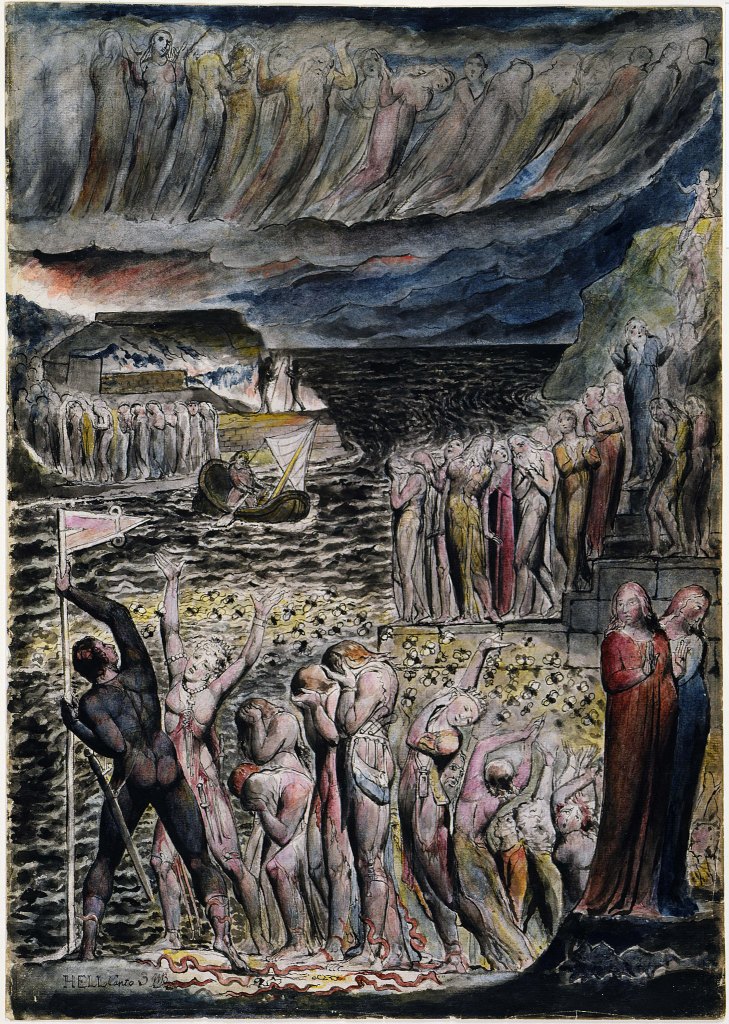

All of this brought to mind one of the most celebrated passages in Marx’s writings, from the opening chapter of The Eighteenth Brumaire of Louis Bonaparte (1852):

Bourgeois revolutions, like those of the eighteenth century, storm more swiftly from success to success, their dramatic effects outdo each other, men and things seem set in sparkling diamonds, ecstasy is the order of the day—but they are short-lived, soon they have reached their zenith, and a long Katzenjammer [confusion] takes hold of society before it learns to assimilate the results of its storm-and-stress period soberly. On the other hand, proletarian revolutions, like those of the nineteenth century, constantly criticize themselves, constantly interrupt themselves in their own course, return to the apparently accomplished, in order to begin anew; they deride with cruel thoroughness the half-measures, weaknesses, and paltriness of their first attempts, seem to throw down their opponents only so the latter may draw new strength from the earth and rise before them again more gigantic than ever, recoil constantly from the indefinite colossalness of their own goals – until a situation is created which makes all turning back impossible, and the conditions themselves call out:

Hic Rhodus, hic salta!

Here is the Rose, here dance!

As you may recognize, Marx’s final lines refer to an equally well-known passage from the Preface to Hegel’s Philosophy of Right (1820):

This treatise, in so far as it contains a political science, is nothing more than an attempt to conceive of and present the state as in itself rational. As a philosophic writing, it must be on its guard against constructing a state as it ought to be. Philosophy cannot teach the state what it should be, but only how it, the ethical universe, is to be known.

Ιδού Ρόδος, ιδού και το πήδημα

Hic Rhodus, hic saltus.

To apprehend what is is the task of philosophy, because what is is reason. As for the individual, every one is a son of his time; so philosophy also is its time apprehended in thoughts. It is just as foolish to fancy that any philosophy can transcend its present world, as that an individual could leap out of his time or jump over Rhodes. If a theory transgresses its time, and builds up a world as it ought to be, it has an existence merely in the unstable element of opinion, which gives room to every wandering fancy.

With little change the above saying would read:

Here is the rose, here dance

The barrier which stands between reason, as self-conscious Spirit, and reason as present reality, and does not permit spirit to find satisfaction in reality, is some abstraction, which is not free to be conceived. To recognize reason as the rose in the cross of the present, and to find delight in it, is a rational insight which implies reconciliation with reality. This reconciliation philosophy grants to those who have felt the inward demand to conceive clearly, to preserve subjective freedom while present in substantive reality, and yet thought possessing this freedom to stand not upon the particular and contingent, but upon what is and self-completed.

While concurring with much of what Hegel has to say here, Marx parts company with Hegel’s call for a “reconciliation with reality.” A note in the indispensable online Encyclopedia of Marxism suggests that Marx’s citation prefigures his later “maxim in the Preface to the Critique of Political Economy (1859): ‘Mankind thus inevitably sets itself only such tasks as it is able to solve, since closer examination will always show that the problem itself arises only when the material conditions for its solution are already present or at least in the course of formation!’”

The note then continues:

So Marx evidently supports Hegel’s advice that we should not “teach the world what it ought to be,” but he is giving a more active spin than Hegel would when he closes the Preface observing:

For such a purpose philosophy at least always comes too late. Philosophy, as the thought of the world, does not appear until reality has completed its formative process, and made itself ready. History thus corroborates the teaching of the conception that only in the maturity of reality does the ideal appear as counterpart to the real, apprehends the real world in its substance, and shapes it into an intellectual kingdom. When philosophy paints its grey in grey, one form of life has become old, and by means of grey it cannot be rejuvenated, but only known.

The owl of Minerva, takes its flight only when the shades of night are gathering.

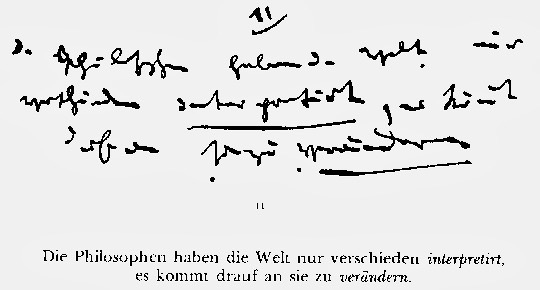

The opposition Marx sets up in this passage is already beautifully articulated in his early „Die Thesen über Feuerbach“ (1845). There Marx writes, „Die Philosophen haben die Welt nur verschieden interpretiert; es kommt darauf an, sie zu verändern.“ (“The philosophers have only interpreted the world in various ways; the point, however, is to change it.”) Crucially, Marx also always stresses that such radical change should not be dictated by these philosophers; rather philosophers—read in our case, intellectuals, teachers, and even bloggers—should take their lead from those most endangered by the world that is. For us living today that would very much include all those whom Brecht names die Nachgeborenen, those who are born after us, or the generations to whom we have bequeathed such a mess.

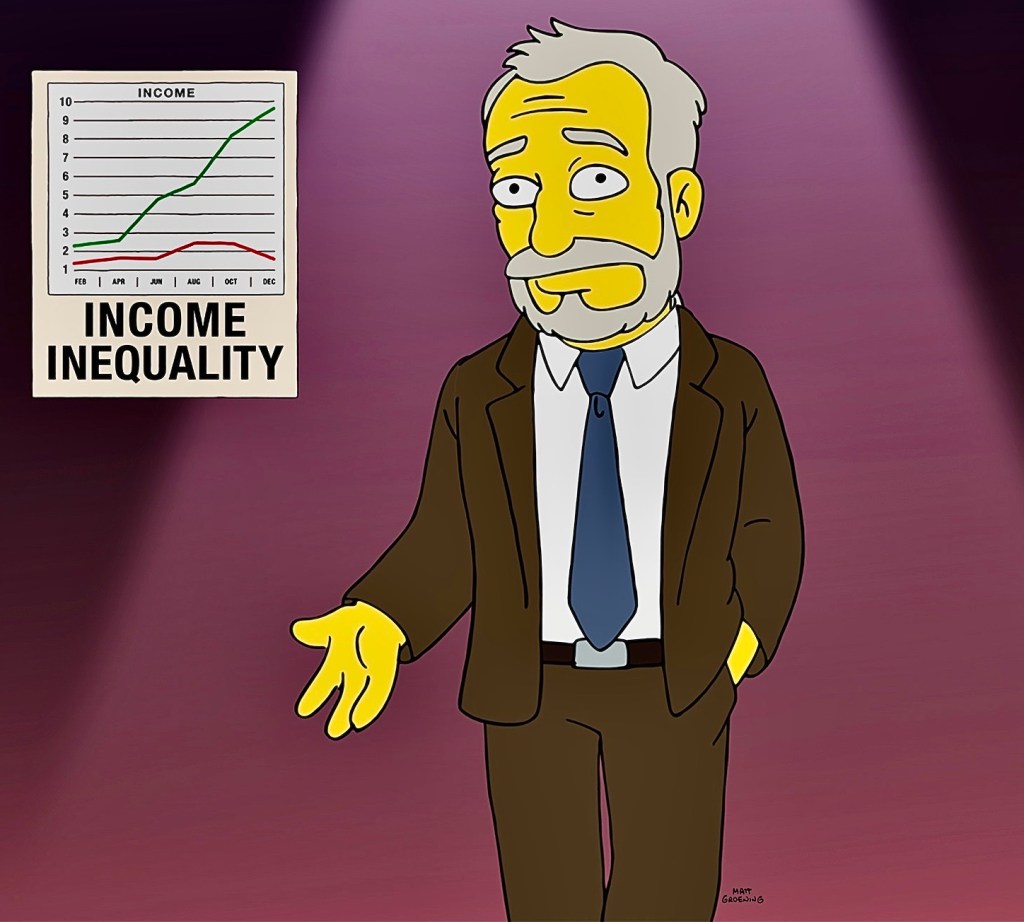

How we arrived in this intolerable situation is brilliantly summarized in the unlikely place of last season’s finale of The Simpsons, which first aired May 22, 2022. In response to the episode some far-right commentators have called for a further boycott of Disney (not very effective if my observations of the last few days are any indicator), purportedly to teach them “that re-writing history to score political points isn’t acceptable.” The ironies in such assertions, especially in light of the January 6 hearings, are rich indeed. The Simpsons episode is entitled “Poorhouse Rock,” and features guest appearances by Hugh Jackman as the singing janitor-narrator and none other than former Secretary of Labor, Professor Robert Reich.

The episode very well could have been written by Bertolt Brecht and Kurt Weill:

Wer seine Habsucht zeigt,

Um den wird ein Bogen gemacht.

Mit Fingern zeigt man auf ihn,

Dessen Geiz ohne Maßen ist!

Wenn die eine Hand nimmt,

Muß die andere geben;

Nehmen für geben, so muß es heißen, Pfund für Pfund!

So heißt das Gesetz!

(To those who show their greed,

People give a wide berth.

With unfriendly fingers they point at the one,

Whose greed is beyond measure!

What one hand takes,

The other must give;

In the measure you give, it will be given to you, pound for pound!

That’s the law!)

Or, perhaps more appropriately in the US context, Marc Blitzstein:

That’s thunder

That’s lightning

And it’s going to surround you

No wonder

Those storm birds

Seems to circle around you

Well you can’t climb down and you can’t say no

You can’t stop the weather

Not with all your dough

For when the wind blows

And when the wind blows

The cradle will rock

In another wonderful convergence, in Tim Robbins’s film The Cradle Will Rock (1999), based in part on the events surrounding the June 16, 1937 premiere of Blitzstein’s musical, the role of Blitzstein is played by long-time Simpsons voice actor Hank Azaria. (I’ve written about the film in an essay published in the collection Literary Materialisms [2013], edited by Mathias Nilges and Emilio Sauri.)

For those of you who missed it and don’t have access to the full Simpsons episode, I’ve provided links in three parts to its extraordinary final song sequence entitled “Good Bye Middle Class.” Part 1, in which Reich makes his appearance, offers an intelligent and accessible thumbnail history of the post-war U.S. as well as the neo-conservative and neo-liberal assaults on the achievements of the Great Society. (For a valuable reading of the connections between the Supreme Court’s recent sledge-hammer blows to democracy and the long wave of transformations in U.S. life that began in 1981, see Heather Cox Richardson’s June 24, 2022 “Letters from an American.”) Part 2 provides a short bridge, where Bart tries to imagine paths out of his, and his generation’s, predicament (the young today “recoil constantly from the indefinite colossalness of their own goals”).

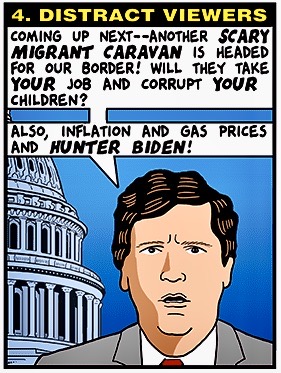

Part 3 brings it all home by exposing the ways the right-wing media’s blatant lies (re-writing history to score political points is never acceptable) fuel the growing paranoia and nihilism of the gerontocracy, whose votes played an important role in bringing about the current mess: “The average age of Members of the House at the beginning of the 117th Congress was 58.4 years; of Senators 64.3 years.” Indeed, Clarence Thomas and Samuel Alito would have fit right into the crowd pictured near the song’s conclusion.

As the episode makes clear, neither of the possible solutions taken up by Bart—to literally “burn it down” or to be among the “last men standing” in the middle class as the planet continues to burn for everyone else—will solve the colossal challenges of the present moment. But what this episode, as well as the events of the past few days, so powerfully reminds us is that we once again stand at a crossroads in the nation’s, and along with it the planet’s, history. It’s here then that we must dance. The storm birds are circling and the wind blows more fiercely every day. In the end, “What you give out will come back to you—full circle” (Luke 6:38, First Nations Version).

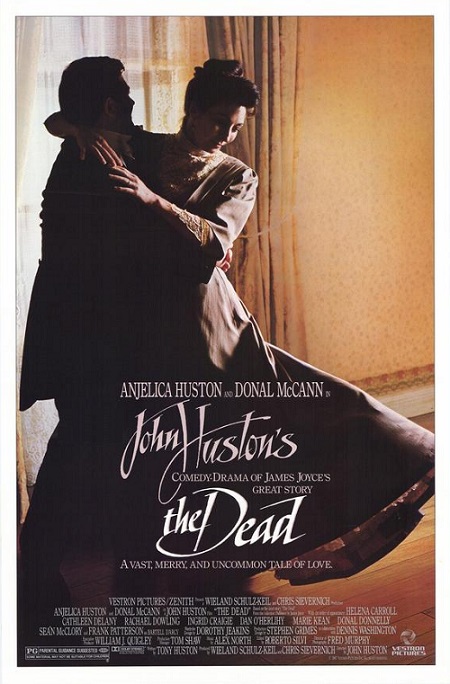

Happy birthday James Joyce (February 2, 2022)

Today is the 140th birthday of James Joyce (1882-1941), and, making this one extra special, it is also the centenary of the publication of Ulysses.

In honor of the occasion, here’s a poem I wrote late in the fall of 2020 and which appeared last spring in the final print issue of the much beloved James Joyce Literary Supplement 35, no. 1 (Spring 2021). It is very special to me and I hope you enjoy it.

A River Runs Back to its Beginning;

Or, Wake Again Finn

A non-reading of James Joyce, by Phillip E. Wegner

—For my students.

Winter solstice,

in dark times, 2020.

He rests. He has travelled.

I.

O.

Hail and farewell.

Vanity of vanities.

We’re going downhill fast.

The leaves have all fallen,

The pages of my book drift from me.

Only one remains.

I’ll bear it on me to remind me of life!

II.

So soft this morning hour,

Faintly falling.

Soft morning, city!

Yes.

I said yes.

I will.

Yes.

III.

“Carry me along, daddy,

Like you done through the toy fair.”

Who is that lankylooking galoot?

If I’d seen him coming for me now

Under billowing white sails

Like he was a Norseman,

Or Michael the Archangel,

I’d sink down at his feet,

humbly and silently,

As after a great fall,

Only to wake, still broken, alone.

IV.

Yes, the tides have changed.

It is time.

There’s where we first made love.

I put the rose in my hair

Like the Andalusian girls.

But you could not have a green rose.

But perhaps somewhere?

We pass through the grass

Without a sound.

V.

Hush!

Dawn breaks.

A call from afar,

Father calls.

Coming, father!

How long?

Have we gone so far already?

VI.

Here Comes Everybody,

They come from far and near.

The two of us then,

Us and them,

The end is here.

Time to begin.

Again!

Can you?

Wide-awake and laughing-like.

Take two.

VII.

But softly, remember me,

Until a thousand years have passed,

Until the end of time.

Your lips are the keys to my heart,

And they have been given to me.

Tell me the word, mother,

If you know now.

I will walk, I will talk;

I will walk and talk with thee.

VIII.

Away I go,

A way I know,

Alone

At last,

A Lasting Peace,

A loved I loved,

A long time,

A long way,

Along the

•

Old father, old artificer

Stand me

Now and ever

Night!

Notes

Title:

riverrun, past Eve and Adam’s, from swerve of shore to bend of bay, brings us by a commodius vicus of recirculation back to Howth Castle and Environs.

Finnegans Wake I.1, pg. 3

Title:

Finn, again!

Finnegans Wake IV, pg. 628

Subtitle:

Reading is first and foremost non-reading.

Pierre Bayard, How to Talk About Books You Haven’t Read, Ch. 1

Epigraph:

He rests. He has travelled.

Ulysses, “Ithaca,” pg. 606

Line 1:

O

tell me all about

Anna Livia! I want to hear all

Finnegans Wake I.8, pg. 196

Verses 1-8:

So. Avelaval. My leaves have drifted from me. All. But one clings still. I’ll bear it on me. To remind me of. Lff! So soft morning this morning ours. Yes. Carry me along, taddy, like you done through the toy fair. If I seen him bearing down on me now under whitespread wings like he’d come from Arkangels, I sink I’d die down over his feet, humbly dumbly, only to washup. Yes, tid. There’s where. First. We pass though the grass behush the bush to. Whish! A gull. Gulls. Far calls. Coming, far! End here. Us then. Finn, again! Take. Bussoftlhee, mememormee! Til thousendsthee. Lps. The keys to. Given! A way a lone a last a loved a long the

Finnegans Wake IV, pg. 628

Line 4:

According to Beckett, before leaving Paris Joyce had said—‘with something like satisfaction’— ‘We’re going downhill fast.’

Gordon Bowker, James Joyce, Ch. 34

Line 10:

faintly falling, like the descent of their last end, upon all the living and the dead.

Dubliners, “The Dead,” pg. 194

Line 11:

Soft morning, city!

Finnegans Wake IV, pg. 628

Lines 13-15:

yes I said yes I will Yes

Ulysses, “Penelope,” pg. 644

Line 18:

Who is that lankylooking galoot over there in the macintosh?

Ulysses, “Hades,” pg. 90

Lines 30-31:

I put the rose in my hair like the Andalusian girls used

Ulysses, “Penelope,” pg. 643

Lines 32-33:

But you could not have a green rose. But perhaps somewhere in the world you could.

A Portrait, Ch. I, pg. 9

Line 41:

—How long is Haines going to stay in this tower?

Ulysses, “Telemachus,” pg. 4

Line 43:

Here Comes Everybody.

Finnegans Wake I.2, pg. 32

Line 50:

Can you work the second for yourself?

Ulysses, “Nestor,” pg. 23

Line 51:

—Wide-awake and laughing-like to himself . . . .

Dubliners, “The Sisters,” pg. 9

Lines 58-59:

Tell me the word, mother, if you know now. The word known to all men.

Ulysses, “Circe,” pg. 474

Lines 60-61:

I will walk, I will talk;

I will walk and talk with thee.

“The Keys of Heaven”

Line 71:

•

Ulysses, “Ithaca,” pg. 607

Lines 73-75:

Old father, old artificer, stand me now and ever in good stead.

A Portrait of the Artist as a Young Man, Ch. V, pg. 276

Line 77:

Night!

Finnegans Wake I.8, pg. 216

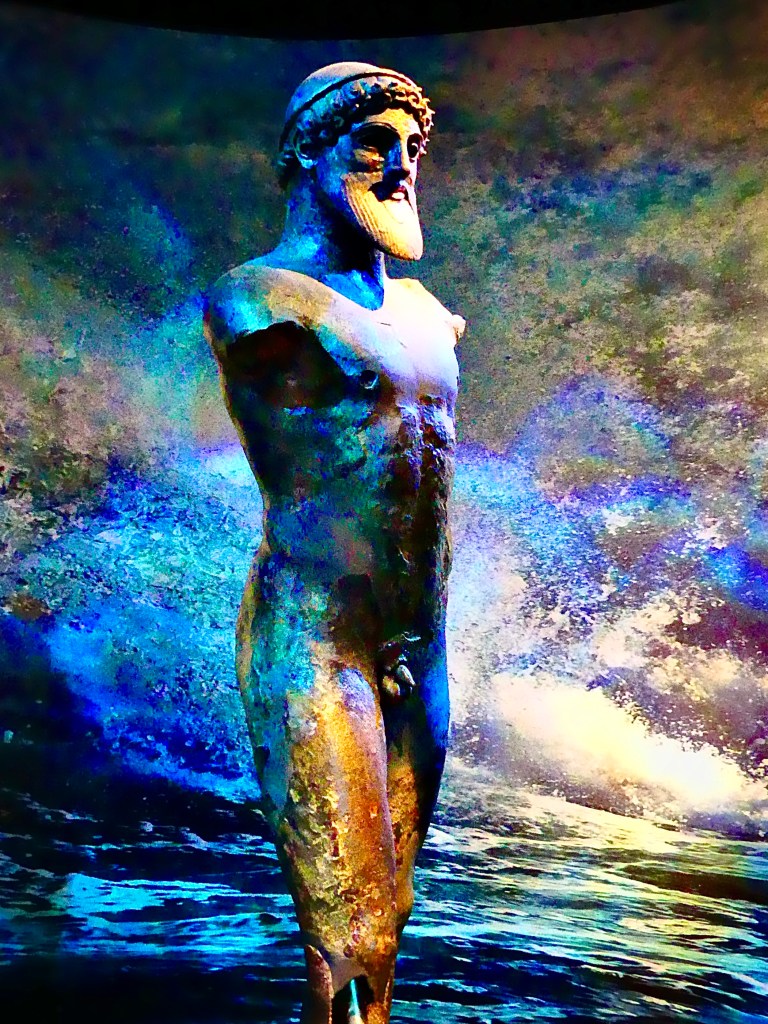

This work represents an experiment in reading, or what Pierre Bayard refers to as non-reading (hearing about, skimming, and forgetting), the closing lines of James Joyce’s Finnegans Wake. In it, I combine a multi-dimensional translation of the lines (including what I believe may be one new discovery: “A gull. Gulls.” from the Albanian agull, “dawn, daybreak” or “semi-darkness, fog”) with a version of the poetic form of the cento, or collage poem, composed largely of lines drawn from a number of Joyce’s works. I took a first stab at translating the passage in my fall 2014 undergraduate honors seminar on Joyce’s fiction and then returned to it in the fall of 2020 for another honors seminar, substantially revising, nuancing, and expanding the translation, recasting it in poetic stanzas, and interspersing where I determined most appropriate some of the key lines, which we had discussed during the course of the semester, from Joyce’s other fiction. I then put together as a gift for my students a small chapbook, which included a printing in a facsimile font based on Joyce’s handwriting and the photo below, which I took during a summer 2017 visit to Athens, Greece. My non-reading of Finnegans Wake needs to acknowledge the indispensable aid provided by Roland McHugh’s Annotations to Finnegans Wake, Edmund Lloyd Epstein’s A Guide Through Finnegans Wake, and the annotated online Finnegans Wake. All of my non-reading of Joyce’s work owes a huge debt to my former colleague and the celebrated Joyce scholar, Brandy Kershner (1944-2020), honored in the JJLS’s penultimate issue (34, no. 2) by his student, close friend, and another great Joyce scholar, Garry Leonard.

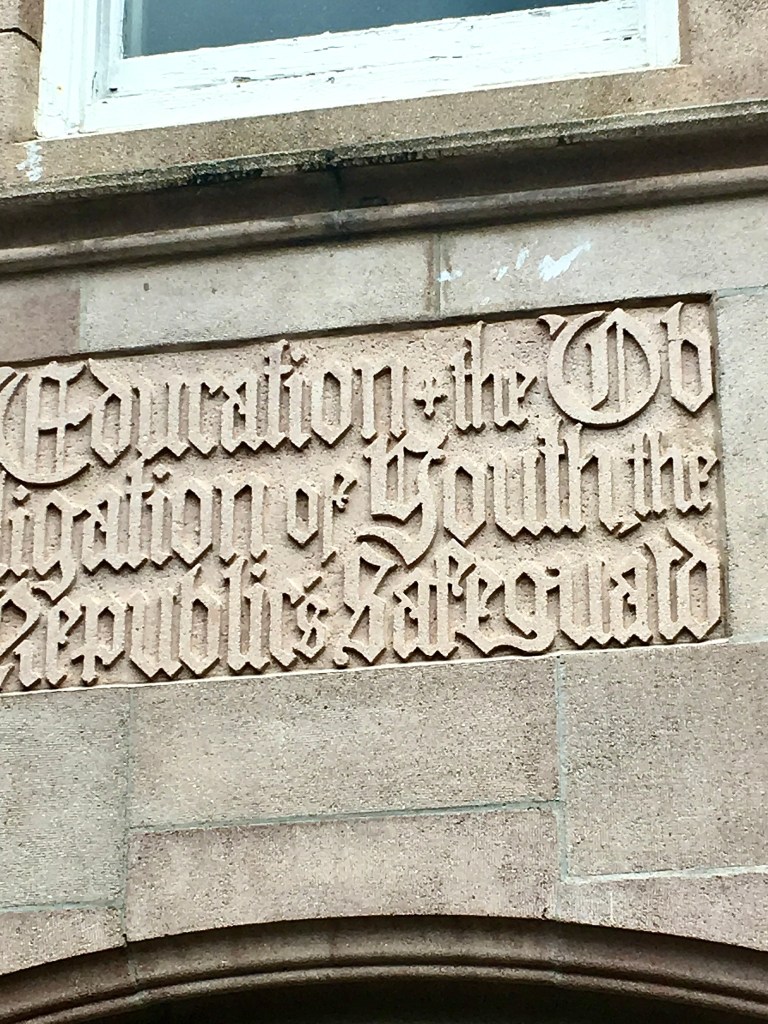

Compromise and the Fall of a University (November 8, 2021)

By 1933 the FZ [Frankfurter Zeitung] had committed itself to a policy which was governed, in [Rudolph] Kircher’s words, by “the law of the lesser evil” (26 June 1932). This law continued to be applied after Hitler’s accession to office, and it ordained that compromise was preferable to confrontation, despite the blatantly anti-democratic, racist, and violent nature of National Socialism, characteristics which the FZ had never ceased to bring to its readers’ attention and to decry before 1933. Attempts to appease the political right were an established practice before Hitler was appointed chancellor.

Modris Eksteins, “The Frankfurter Zeitung: Mirror of Weimar Democracy”

Ed elli a me: “Questo misero modo

tegnon l’anime triste di coloro

che visser sanza ’nfamia e sanza lodo.

(And he said to me: ‘This miserable state is borne/ by the wretched souls of those who lived/ without disgrace yet without praise.)

Dante Alighieri, Inferno, Canto III

On Saturday night, the University of Florida football team—which in the last year has twice valiantly fought back to be within a few points of defeating reigning national champion and currently number two-ranked Alabama—suffered a humiliating 17-40 loss to the University of South Carolina. This dropped the team, which in early October had a 4-1 record and was ranked in the top ten, to a dismal 4-5, including 2-5 in the Southeastern Conference and with losses to long-time rivals LSU and Georgia. The response of fans and sports analysts has been decisive and uncompromising. Calls for the replacement of Head Coach Dan Mullen as well as current UF Athletic Director Scott Stricklin, both of whom previously worked together at Mississippi State University (Stricklin was also responsible for bringing Mullen to UF) have exploded across the internet. One UF graduate on Twitter concisely summarizes the affair in this way: “Scott Stricklin is just as absent in leadership as the football head coach is.”

A similar response to the failure of UF’s academic leadership has emerged following last week’s revelations of decisions by university administrators to deny at least eight faculty members, from a variety of different disciplines and colleges, permission to testify in court or serve as expert witnesses in cases involving the state government. The administrative argument for these denials runs as follows: “outside activities that may pose a conflict of interest to the executive branch of the state of Florida create a conflict for the University of Florida.” The university first tried to backtrack and claim this was only for services that involved payment, but this was followed by further revelations of other denials in cases, including those in the medical school, that did not involve any payment whatsoever. The result has been a growing chorus of voices throughout the nation and abroad condemning these actions as well as an investigation on the part of the national accrediting agency into potential violations of academic freedom standards. Thus, far more significantly than what has occurred this fall on the playing field, these actions represent a dire threat both to the core principles of the university and the reputation and ranking of the institution as a whole.

In the November 1, 2021 online edition of The Chronicle of Higher Education, University of Michigan Professor Silke-Maria Weineck describes the chilling parallels between these developments at UF and the lead-up to the Nazi seizure of control in 1933 of the state and its institutions, including the university. Weineck points out, “The most deeply troubling aspect of this episode is the explicit conflation of the interest of a state government with the interest of a state university. A public university is beholden to truth-seeking and truth-speaking, and neither can possibly be subject to direct political control. A university that bars its faculty from criticizing the government in court has abandoned its core mission and tossed what should be its most fundamental values to a foul-smelling wind.” Weineck concludes her essay on this powerful note: “Democracies go bankrupt the same way everybody else does: very slowly, then all of a sudden. We are still at ‘slowly.’ All of a sudden is scheduled for Tuesday, November 8, 2022. If Florida’s administrators have ever asked themselves how they would have acted in 1932, now they know.”

Fully uncompromising in her assessment of the actions of UF’s administrative leaders, Weineck also sheds significant light on the path that led to this morass. To many, the revelations in the past few weeks of the extent of the capitulation of the university’s leaders to the whims of the governor—and even what Weineck refers to as their vorauseilender Gehorsam: “‘obedience ahead of the command.’ The Yale historian Timothy Snyder translates it as ‘anticipatory obedience,’ and that is close enough, but it doesn’t quite capture the scurrying servility implied in ‘vorauseilen,’ to hurry ahead”—seem an “all of a sudden” shift in policy. Of course, through his media spokespeople the governor continues to deny that he in any way influenced these decisions—though DeSantis campaign “mega-donor” and the head of his transition team Mori Hosseini is currently the Chair of UF’s Board of Trustees and thus works closely with UF’s upper administrators, and a raft of legislation the governor happily signed last summer, which will have a chilling effect on education in the state more generally, also indicates that he is no champion of “free speech, open inquiry and viewpoint diversity on college and university campuses.” Moreover, we should keep in mind that this “all of a sudden” also encompasses UF’s President Kent Fuchs’s sudden reversal at the beginning of the fall semester of the university’s plans to act on the recommendation of its own medical experts and have the first weeks of classes online or require masks to be worn in all campus facilities. Fuchs claims that he could not allow obvious COVID mitigation strategies such as mandatory masks or vaccines because he believes the “university does not currently have the authority” to counter the COVID-denying mandates of the governor. Then in September, Fuchs informed “the UF Faculty Senate, which was considering a statement critical of the governor’s COVID policies, that anyone who represents UF shouldn’t do anything to rupture or fracture our relationship with our state government and our elected officials.”

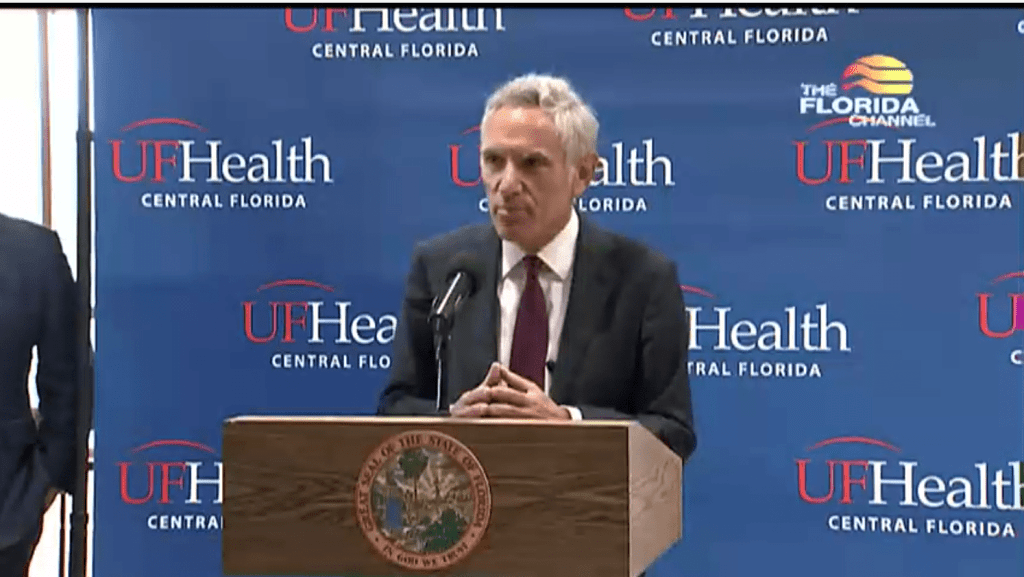

Fuchs’s decision was made even more shameful by the fact that the local K-12 school board, in the face of dire threats from the governor and state Board of Education, courageously stood by the recommendations of UF’s faculty experts and required masks in all school facilities—a policy now extended, with the exception of those with documented medical conditions, until at least early December. A few weeks later UF’s administration along with Hosseini were again in the news for “fast tracking” the approval of a position at the university’s medical school for Dr. Joseph Ladapo, the governor’s controversial appointee to the position of state Surgeon General. Buried in the glut of other terrible news this past week was the story of Democratic State Senator Tina Polsky, who received “a profanity-laced, anti-Semitic death threat” as a result of her request, because she is in treatment for cancer, for “Ladapo to leave her office because he wouldn’t wear a face covering.”

However, UF’s “bankruptcy,” just like that of its football team and the state of Florida as a whole, has been unfolding, as Weineck would have it, much more “slowly” over the course of many months and even years. Such changes are part and parcel of the “tyranny of neoliberalism” that continues to be imposed on all institutions of higher education. On the eve of the 2016 U.S. election, University of Virginia American historian Alan Taylor characterized these changes as involving the gradual elimination of those aspects of the university that aim at producing the social “public goods” necessary for democracy to flourish—including the core values of expert knowledge, academic freedom, and the full autonomy of the university and its faculty from state control—and an increasingly exclusive focus on individual “economic goods,” those that flow both to the university’s graduates and the institution itself. Taylor points out that today the reigning common sense is that “the individual student is the primary beneficiary of education and that its value is best measured in dollars subsequently earned.”

Taylor’s observations were borne out in September when at a press conference—indoors and unmasked—announcing UF’s climb to a tie for fifth place in the U.S. News & World Report’s most recent rankings of public universities, DeSantis crowed, “Students and families know that getting a great education for a great price, with minimal debt, and skills to prosper and adapt in a fast-changing workforce, there is no doubt the success of UF is tied to the success of our state—present and future.” As a result of this change, Taylor further points out, “it now sounds fuzzy and naïve to speak of any other benefits of higher education, such as knowledge for its own sake, increased happiness, an enhanced appreciation of art, or a deeper understanding of human nature and society. Along the way, we also have shunted into the background the collective, social rewards of education: the ways in which we all, including those who do not attend college, benefit from better writers and thinkers, technological advances, expanded markets, and lower crime rates.” This narrowing of the mission of higher education has contributed in no small part to the deeply polarized, paranoid, unstable, and increasingly violent society we inhabit today.

These changes came about slowly and in a piecemeal fashion through a combination of the undermining of real shared governance on campus and the increase in top-down dictates from the state, university governing boards, and local administrators to the university’s other “stakeholders”—the majority of its faculty, students, and less well-positioned alumni. These mandates include unprecedented interventions in curricular and classroom matters. While it is rare that these changes came with the endorsement of these other stakeholders, they were also not often enough challenged directly and in the public sphere. Instead, those lower down the university chain of command were forced to turn their attention to the task of implementing these new policies, asking themselves: how do we do these things, even if we are convinced that they are wrong, so that they result in the least amount of harm to our students and communities? The result is the establishment of precedents such that UF’s President Fuchs can now claim, “the policy ‘is very simply about participating in litigation against your employer. . . . We have a tradition here recently of not approving any employee that is an employee of the state of Florida then serving as an expert witness in litigation against the state of Florida. . . .That’s been our practice.” If any such “recent tradition” (an oxymoronic notion if there ever was one) exists, it is only because public challenges were not raised by either those whose activities were blocked or those who implemented these changes.

At the root of this acquiescence on the part of the university’s administration, and in turn its faculty and students, is what Rachel Greenwald Smith identifies in her recent book On Compromise: Art, Politics, and the Fate of an American Ideal as the essential neoliberal value of compromise. Greenwald Smith points out that such an ethos serves as a way of “avoiding of ideological conflict: invocations of you do you and do your own thing, idealized notions of consensus, and simple acts of erasure— of conflict itself and of those who might provoke conflict” (6). In her book’s moving final pages, Greenwald Smith further observes,

[C]ompromise requires the belief that unsatisfactory things can be made satisfactory, at least temporarily. That the pain and loss generated by a bad situation can be managed, or made fair, or tolerable, even if the underlying conflict remains. We have been trying to give things up to make each other happy, but in doing so we have made the mistake of so many compromisers: we have pushed away the reality of those unsatisfactory conditions, when we could have confronted them in all of their intractability, in all of their dread. (195)

Greenwald Smith is not so naïve as to suggest that compromise is not necessary in specific and local contexts and decisions. Rather, problems arise with the “confusion between compromise as a means and compromise as an end” (9). From the former perspective, the only good compromise is one in which all the parties involved feel as if they have failed and thus remain committed to battling on: “When I say compromises are ugly things, I don’t mean that they are monstrous or oppositional or disturbing or challenging. I mean that they are unsatisfying, awkward, boring, haphazard. They might be the best we can get, but they do not and should not please us” (51). The value of compromise as an end leads to the excoriation of those who do resist as being inflexible (“flexibility,” as Catherine Malabou reminds us, is another of the fundamental neoliberal values), dogmatic, or, worst of all, uncollegial. This condemnation further results in the marginalization of other viewpoints in any meaningful decision-making process regarding the university’s future. Such a marginalization of the faculty in these processes has in recent years become more and more the norm. This is so much the case today that in his praise of those who participated in UF’s climb in the rankings, a climb that began long before his administration took office, DeSantis could overlook one of the most important contributors to the university’s success: “There’s a lot of great students, administrators, the Florida legislature, and board members that have continued to make Florida the best place in the nation to get a great education.” But where would the university be without its faculty?

Here is where we might extend Weineck’s parallel between our current crisis and the gradual ascendancy of the Nazis in Germany. If the ready acquiescence of the university’s administrators to the whims of current political leaders places them in a position similar to German leaders in 1932, then silence and passivity on the part of faculty and students make us the equivalent of those journalists who worked for The Frankfurter Zeitung in the years “before Hitler was appointed chancellor:” those who may have fully understood what was coming into being but who believed that “compromise was preferable to confrontation,” and in so doing, began slowly to acclimate to a terrible new reality. Only when the path forward was set did they respond, but by then it was too late. At this point, the only options that remained were making do in this new situation or flight; however, as much today as in those earlier dark times, flight, even if it were necessary, remains an option available only to a privileged few.

Thankfully, as Weineck emphasizes, we have not yet reached the point of no return. There is still an opportunity to renew the best traditions of the American university and, along with it, democracy. However, these changes will not come about through such risible actions as the university president’s appointment of a “task force,” largely made up of other upper administrators, to make recommendations to him on how “UF should respond when employees request approval to serve as expert witnesses in litigation in which their employer, the state of Florida, is a party.” Even the suggestion that this is an issue to be discussed represents a fundamental threat to the autonomy of the university and its role as a servant of the public good of our state and nation rather than of their current leaders. Travel down this path and very soon the “king’s two bodies” become one again.

One of the other great historians of the modern American university, Christopher Newfield, points out,

Advancing the new education can’t rest mainly on appeals to the better angels of society’s top brass. Its advancement will depend on intellectual and social movements, on political, ethical, and sociocultural justifications that address a wide range of society’s conflicted publics and seek to build political majorities, often in opposition to business elites and their politicians. One last twist: tenured faculty members will need to join this opposition even though, as descendants of the postwar professional-managerial class, they are traditionally allied with business elites and have used professional rights, like self-governance, quite sparingly. As any of our students might say to us—good luck with that.

At UF, the organizations that remain most committed to these rights of self-governance, while also having the collective power to act uncompromisingly in upholding the core principles of a democratic university, are the faculty and graduate student unions, United Faculty of Florida (UFF) and Graduate Assistants United (GAU). While opponents will grumble that unions only “politicize” higher education, the events of recent months make clear where such politicization always begins.

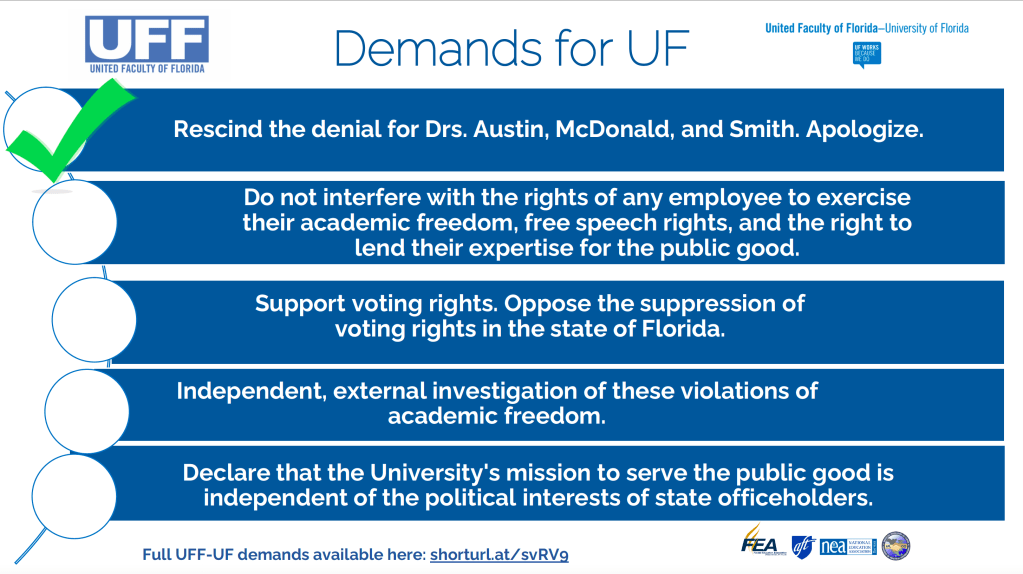

It is no coincidence that the pushback against Fuchs’s “recent tradition” began in earnest when active members of the university’s collective bargaining unit were prohibited from testifying in support of legal challenges to the state’s recently enacted voter restrictions. Moreover, it was only after a UFF press conference announcing a set of demands and possible actions that the administration agreed “to reverse the decisions on recent requests by UF employees to serve as expert witnesses in litigation in which the state of Florida is a party and to approve the requests regardless of personal compensation.”

While this is a significant first step and an encouraging demonstration of what coordinated collective action may accomplish, it is only a first step. This is the moment when a retreat into the bad habits of compromise might occur: after all, the thinking goes, shouldn’t we accept this positive turn and wait until December in the hope that the president’s task force will make the only reasonable decision? Recent actions would only suggest, good luck with that. Moreover, while the university has reversed its most recent decisions, it has so far ignored UFF’s other demands:

- The University must also issue a formal apology to faculty affected by these prohibitions for violating their academic freedom and their rights as workers.

- University administration must affirm that it will not interfere with the right of any employee to exercise their conscience, academic freedom, free speech rights, and expertise in an expert witness context, regardless of whether they receive payment for their expertise.

- UF must affirm its support for voting rights and commit to opposing ongoing efforts to suppress voting rights in the state of Florida.

- UF must formally declare that the University’s mission to serve the public good is independent of the transitory political interests of state officeholders. Instead, UF should uphold its mission statement as the prime directive for all University activities.

After President Fuchs’s Friday announcement, UFF-UF president Paul Ortiz stressed the need to be uncompromising in our expectations: “UFF-UF is looking for a clear and unambiguous commitment to academic freedom going forward. We are also requesting an external review of UF’s practices regarding requests for approval of outside scholarly activities. This external review should mirror best academic practices of peer review and external review in higher education.” Until such steps are taken, it remains absolutely necessary to keep in place the specific actions recommended for UF donors, national education leaders, artists, scholars, and intellectuals invited to appear at UF, and all faculty. By refusing compromise on our core values, we may yet avert UF’s and Florida’s own 1933. Then, perhaps, one day in a not too distant future, we might be able to respond with pride and dignity when asked the question: how did you act in 2021?

The Fire This Time (January 29, 2021)

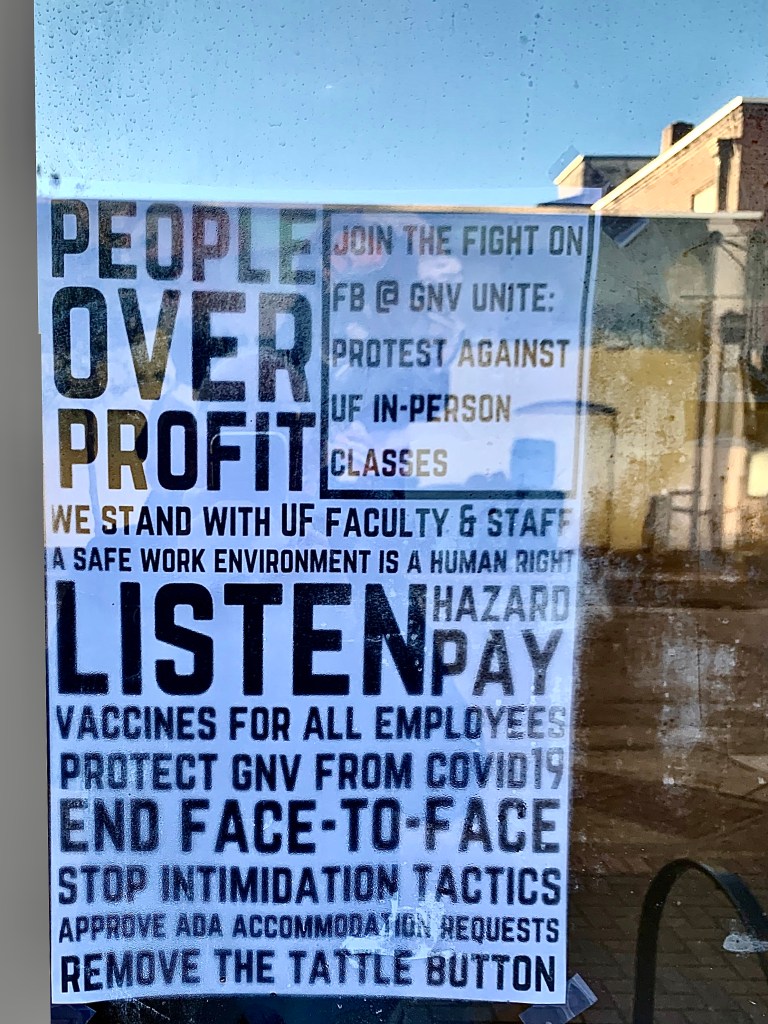

I am sure most of you reading this post are already fully aware of the dire situation at the University of Florida this spring in terms of the coerced face-to-face teaching demanded by the university’s higher administration. This has become a national scandal and there have been numerous major media reports, of which the following list represents only a sample:

Orlando Sentinel, January 26

Washington Post, January 23

Gainesville Sun, January 23

Inside Higher Ed, January 21

Chronicle of Higher Ed, January 19

EdSurge, January 14

As these stories all make clear, the response of our university to the pandemic differs not only from that of universities in other states—in the last week, the University of Michigan has suspended its sports programs and then issued a stay-at-home order for its entire campus—but also from that of other campuses in our state system. Indeed, as the Orlando Sentinel reports, “The start of the semester has gone smoother at Florida State University, where in-person classes resumed on Jan. 19. Just over half of the school’s course sections are taught face-to-face this spring, though many of them are small, said Matthew Lata, a professor in the College of Music who serves as the president of the faculty union there. ‘So far, I’ve had almost no complaints,’ Lata said, adding his administration’s approach had been more conciliatory than UF’s. ‘It’s a much happier shop here in Tallahassee than it is in Gainesville.’”

Despite its claims to the contrary, our university is pursuing these policies in disregard of the recommendations of national and international medical experts and in the face of skyrocketing numbers of infections in Florida and in Alachua County, the county where UF is located, which is currently, according to The New York Times, at an “extremely high risk” level. As a result of these policies, the university is endangering the health and even the lives of many, including those of the most vulnerable populations on campus of untenured faculty, graduate students, staff, and campus support workers, as well as elderly community residents and those who have few options but to serve in the entertainment and service sectors of the local economy (and this, keep in mind, as the January 23 story in the Gainesville Sun bears out, is occurring in a state where Governor DeSantis has mandated 100% bar capacities and local municipalities are barred from enforcing compliance with CDC guidelines). One person who has already paid the price is the university’s basketball star, Keyontae Johnson, whose NBA career may very well be in jeopardy because of his infection and subsequent collapse in the opening moments of a game at Florida State on December 12. (This recent story underscores the fact that the university continues to stonewall any information on the cause of his collapse, hiding behind “student privacy” concerns.)

However, one important point that all these excellent reports tend to pass over is the fact that these decisions, policies, and actions have their roots in larger contexts, of both recent developments and transformations in higher education that have been underway for decades. A half century ago, Fredric Jameson observed that ideology is never simply a matter of content—of the ideas, beliefs, and values we hold in our heads—but rather of the very forms of thought we use to understand the world: “The dominant ideology of the Western countries is clearly that Anglo-American empirical realism for which all dialectical thinking represents a threat, and whose mission is essentially to serve as a check on social consciousness . . . The method of such thinking, in its various forms and guises, consists in separating reality into airtight compartments, carefully distinguishing the political from the economic, the legal from the political, the sociological from the historical, so that the full implications of any given problem can never come into view; and in limiting all statements to the discrete and the immediately verifiable, in order to rule out any speculative and totalizing thought which might lead to a vision of social life as a whole.” Thus, in order to more fully understand the current crisis, and even more significantly not to repeat the errors of the past, we need to make these connections explicit.

UF’s Provost Joseph Glover has stated the reason for pursuing such a plan of action is the fact that “Our [his] first commitment is to our students: to provide instruction in the format they requested, whether in person or online.” Whether this McDonald’s customer-first approach to higher education is true or not—and if so, it says a great deal about the attitudes the university currently has toward its faculty, workers, and their families—the situation is far more complex. First of all, such declarations on the part of the university administration conveniently provide ample cover for Florida’s Trump-acolyte governor and Scott Atlas-endorsed “herd immunity” advocate, Ron DeSantis. (I touched on DeSantis’s response to the Covid crisis and its consequences for the university in a blog post last October.) Moreover, as Theodor Adorno would put it, “it is hardly an accident” that the Covid pandemic has led to the suspension of hiring in many departments and programs, already cut to the bone by more than a decade of prior cuts and scanty hiring; the Board of Trustees’ approval last fall of expansive new furlough powers on the part of the administration; and likely significant budget cuts later this spring. These changes parallel those in many other Republican-dominated states, including the recent decision in Kansas (what’s the matter with Kansas?) to use the crisis as an excuse to suspend tenure protections.

However, the University of Florida has made amply clear that the one exception to any contemplated cutbacks is its vaunted AI initiative, launched in earnest this past fall. The “intent” of the initiative, the university’s website proclaims, is to “use this initiative to create a model for AI workforce development that can serve as a template for other colleges and universities in Florida and across the U.S.” No surprise then, in the midst of the Covid emergency, the university recently sent its faculty the cheery news that a new “Data Science and Information Technology” building was underway at the center of our “historic campus,” and this after other already approved projects, such as a long-planned and desperately needed new music building, have been suspended. The building will be named after the alumnus-donor who is also the owner of the company whose proprietary technology underwrites this entire initiative. Moreover, last summer, the university announced the opening of a new luxury “boutique hotel,” one that just happened to be funded by “UF reserve funds, or money left over from previous budgets:” that is, money not spent on hires and other needed infrastructural developments.

As my colleague and our former union president Kim Emery argues in a brilliant essay from a decade ago, these current policies and actions are very much in line with a longer and ongoing program by university administrators, in conjunction with state Republicans from Jeb Bush to the present, to strategically use what Naomi Klein famously terms the “shock doctrine” and Emery calls “management by crisis:” the deployment of “events”—manufactured ones, as was the case in 2006 in the College of Liberal Arts and Sciences, wherein, as Emery notes, the university first “‘uncovered’ a significant fiscal deficit” and then used it to announce a “Five-Year Plan,” which included “combining some departments and reducing others” as well as removing the “Department Chairs of Math and English;” and actual ones, as in the economic collapse in 2008 and the Covid pandemic in the present—as the means of at once eroding faculty governance, destroying unions and other collective organizations state-wide, and reorganizing higher education in Florida. This in turn is part and parcel of a larger neoconservative push that extends back to the early 1980s, which, as the historian Alan Taylor argues in an outstanding essay published on the eve of our unrelenting Trump “error,” worked to undermine the post-World War II project of education for democratic citizenship, or what Taylor refers to as “political goods,” and replace it with an education system focused exclusively on “economic goods,” or individual and institutional financial rewards. (I talk about Taylor’s essay and the consequences of these changes in more detail in the first chapter of my book Invoking Hope.)

The cynical use of the Covid crisis to justify such a neoliberal remaking of the American university is laid bare in an opinion piece published last summer in Inside Higher Ed where the authors argue for the need to use “this moment to move toward needed transformation” instead of “the industry” (!!) reverting “to an old playbook, where cuts are neither strategic nor grounded in important goals of creating a sustainable business model that is aligned with the institution’s strategic vision.” Not surprisingly, their recommendations include the shift from “Budget balancing (‘Do I have enough?’) to return on investment (‘What do I get from what I have?’)” and establishing “clear lines of authority. People need to know who will make final decisions about strategic priorities and how resources will be aligned with these priorities. Good leaders will establish who the deciders are and how stakeholders [sic] can provide input.”

In years past, the university’s success in foisting these changes was in a large part based on the tried-and-true strategy of divide and conquer. In 2006, for example, the university succeeded in pitting against each other departments in the college and individual faculty members within the same departments, by appealing to individual grievances, resentments, and narrow self-interest. The same strategy worked well in a number of other situations in the decade to follow. In the current emergency, the aim is clearly to reproduce this successful strategy by setting undergraduate students against the faculty, graduate students, and university support staff.

In the current moment, such an approach happily thus far seems to be failing. No one among the targeted communities sees any benefits from the situation; undergraduates have made their displeasure clear; and a number of departments have expressed an unwillingness to play along. Moreover, as had been the case in past challenges, UF’s faculty and graduate student unions have marshalled an effective challenge to these policies. A number of other new actions have also been started. Recently, a MoveOn boycott initiative has been launched, after a viral thread exploded. The university administration remains deeply invested in institutional rankings and its “Preeminence Initiative:” what such a preeminence means to Governor DeSantis is made evident in this 2019 story about UF’s rise to #7 in The U.S. News and World Report’s ranking of public universities, complete with a photo-op of him receiving a football jersey from thankful administrators. Making them aware that their current actions will do long-term damage to the university’s reputation and may even lower UF’s rankings will surely have an impact, and international letter writing campaigns are underway. (The email addresses of the president and provost are readily available on the university’s website.)

All of this makes evident a key lesson of this challenging moment. Whatever the outcome of the current crisis, it is vitally important that all of us in the university community—as well as in university communities across the nation and our planet—understand that the events of the last few months are not isolated occurrences, ill-conceived and hasty reactions to an unexpected series of events, but rather an opportunistic use of one more in what now seems to be an endless series of crises. As such, the next crisis will produce a similar response and the only possible hope we have lies in a shared, collective refusal of such a deeply anti-democratic vision of the university and higher education.

Reading James Joyce and Watching the Gators in 2020 (October 19, 2020)

How long is Haines going to stay in this tower?

James Joyce, Ulysses

There is a scene early in James Joyce’s A Portrait of the Artist as a Young Man (1916) that resonates in perhaps unexpected ways with the contemporary nightmare from which we are trying to awake. The scene concerns the early schooling of Joyce’s semi-autobiographical would-be artist Stephen Dedalus, in the moment of his short-lived attendance at the elite Irish Catholic boarding school, Clongowes Wood College in County Kildare. (Joyce, born in 1882, attended the school from ages 6-9 but was forced to leave due to the rapidly declining fortunes of his once middle-class Irish Catholic family.) Joyce writes:

It was the hour for sums. Father Arnall wrote a hard sum on the board and then said:

—Now then, who will win? Go ahead, York! Go ahead, Lancaster!

Stephen tried his best but the sum was too hard and he felt confused. The little silk badge with the white rose on it that was pinned on the breast of his jacket began to flutter. He was no good at sums but he tried his best so that York might not lose. Father Arnall’s face looked very black but he was not in a wax: he was laughing. Then Jack Lawton cracked his fingers and Father Arnall looked at his copybook and said:

—Right. Bravo Lancaster! The red rose wins. Come on now, York! Forge ahead!

This leads Stephen to meditate on the color of roses:

White roses and red roses: those were beautiful colours to think of. And the cards for first place and third place were beautiful colours too: pink and cream and lavender. Lavender and cream and pink roses were beautiful to think of. Perhaps a wild rose might be like those colours and he remembered the song about the wild rose blossoms on the little green place. But you could not have a green rose. But perhaps somewhere in the world you could.

The two parties Father Arnall refers to here, the Yorks and the Lancasters, were competing factions of the fifteenth-century English Plantagenet royal house who engaged in a 32-year-long conflict that would come to be known as the Wars of the Roses, after the white and red rose heraldic emblems of the two sides. As in Stephen’s classroom “game,” the Lancasters proved victorious. Their leader, Henry Tudor, assumed the English throne as Henry VII and established a dynasty that would rule the country for more than a century. During the Wars, Ireland sided with the Yorks and as a result Henry VII and even more so his two successors, Henry VIII and Elizabeth I, would increasingly consolidate and formalize English imperial rule over Ireland, which would continue until 1922.

Although neither young Stephen nor the reader yet realizes it, this short scene brilliantly encapsulates two dimensions of what it means to live in an occupied land. First, Joyce shows the ways that even small gestures such as the choice of names for a children’s game remind the Irish people of both their past defeats and the impossibility of anything like the autonomous Irish history figured by the green rose of which the young Stephen dreams. Secondly, Joyce subtly demonstrates the myriad ways that in any situation of occupation, all aspects of everyday life are politicized. This becomes more and more evident as the novel progresses: in the next section of the first chapter, the family’s Christmas dinner explodes into passionate argument over the sad fate of the recently deceased Irish leader, Charles Stewart Parnell; and the chapter comes to its climax in an Irish landscape whose smells, sights, and sounds—the fields surrounding the elite Anglo-Irish Barton family’s Straffan House and “the sound of the cricket bats: pick, pack, pock, puck: like drops of water in a fountain falling softly in the brimming bowl”—all “mythologize,” as Roland Barthes puts it, the British imperial presence. The “very principle of myth,” Barthes points out, is to “transform history into nature,” in this case making British rule appear to its subjects as an immutable fact of the world, a reign upon which the sun will never set.

Even in the novel’s final chapter, the few careers available to Stephen’s classmates at Dublin’s Jesuit-administered University College—“Did you hear the results of the exams? He asked. Griffin was plucked, Halpin and O’Flynn are through the home civil. Moonan got fifth place in the Indian. O’Shaughnessy got fourteenth”—involve participating in British imperial rule both at home and abroad. It is this situation that Stephen finds intolerable—“My ancestors threw off their language and took another, Stephen said. They allowed a handful of foreigners to subject them. Do you fancy I am going to pay in my own life and person debts they made?”—and in the end he decides to leave Ireland to develop his art (although as we learn in the novel’s “sequel” Ulysses (1922), Stephen’s initial self-imposed exile proves to be short-lived).

I have taught Joyce’s A Portrait numerous times over the course of the last 26 years and every time I do so I learn new things from this magnificent novel. What struck me especially forcefully this fall is how much the situation of late-nineteenth and early-twentieth century Ireland resembles that of the United States in 2020. Although we are not ruled by another nation—or at least, not explicitly—in both situations nearly every aspect of daily life has been politicized to an extraordinary degree.

Even in the most equitable of democratic societies, all our actions are ultimately political in that they involve the reproduction of or challenge to a certain way of life. However, in a situation of occupation and direct domination, all the mediations, nuances, and complexities dissolve away, so that every act, every gesture—the games we play, the entertainments we enjoy, the careers we choose—becomes understood as immediately involved in supporting or challenging the ruling powers. And there are only two choices available. These leaders endlessly remind the ruled: you are either with us or you are our enemy.

Those who deny this reality and pretend there still exists some distance between seemingly inconsequential everyday activities and political life often prove themselves to be among the most complicit. This is very much the case for Joyce’s earlier great character, from his story “The Dead,” Gabriel Conroy (who is also something of a glimpse of an alternate future for Joyce himself had he decided to forgo his vocation as a writer and remained in Ireland). After Gabriel is confronted for producing a literary column for a conservative British-supporting newspaper, he responds in this way: “He continued blinking his eyes and trying to smile and murmured lamely that he saw nothing political in writing reviews of books.”

Such a politicization of everyday life has become more and more the case in the four years of the undemocratic and increasingly explicitly authoritarian regime of Donald Trump. Numerous commentators have pointed out the ways that Trump, along with his supporters on the national and state levels, castigates everyone from career government officials to research scientists—from those in the State Department to agriculture, from climate scientists to epidemiologists—as always already politically partisan. The result is that any statement they make or position they take is understood as either supporting or, as is far more often the case, challenging Trump’s rule. Others note that even gestures such as holiday greetings or displays of the nation’s flag have been transformed into statements of support for the Trump regime. And of course, over the past eight months we have all witnessed how practices recommended by the overwhelming majority of the medical community, such as mask wearing and adequate social distancing, similarly have been transformed into political statements. In such an untenable situation, today as much as in Joyce’s Ireland, the politicization of everyday life both increases stress and anxiety and raises the likelihood of violent clashes. (An even more explicit, quantitatively but not qualitatively different, example of the politicization of everyday life is on display in Glenway Wescott’s superb novel set in Nazi-occupied Greece, Apartment in Athens [1945].)

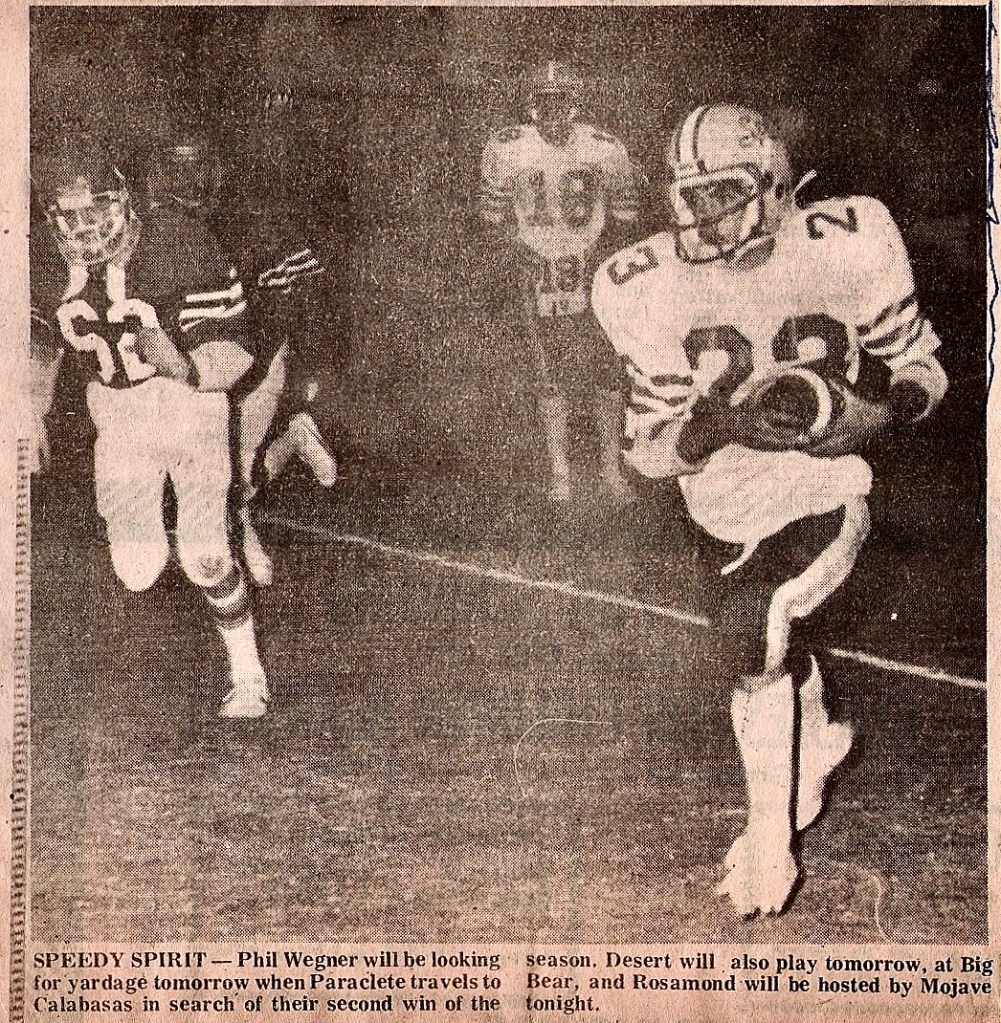

This fall, this has also become true in the case of one of my favorite pastimes, NCAA football (or “hand-egg” as my soccer-playing son calls it). While I have been since my teenage years a dedicated follower of the game and was even a decent football player at my small California parochial high school, the University of Florida is the first institution I have been involved with that houses a major football program. Cal State Northridge was a Division II program while I was an undergraduate there, and while Duke was fun to watch during my first three years in graduate school—when the head coach was the Florida Gators’ former quarterback and first Heisman Trophy winner, Steve Spurrier—football always remained a very distant second to men’s basketball. (Duke played in the Final Four each of the five years I resided in Durham and won National Championships in my final two years there.)

I arrived in Gainesville in the fall of 1994, four years after Spurrier left Duke to assume head coaching duties at his alma mater and launch what was until that time the most successful run in Florida football history, culminating in a victory over state rival Florida State in the 1996 National Championship game. This was the first of what would turn out to be three national championships in my years here (the Gators would win the National Championship again in 2006 and 2008 under Coach Urban Meyer). I quickly became a passionate follower of the team, something that spread to my brothers residing in the Midwest and California: they would come down to Florida for big games and would even participate in UF alumni gatherings in their home towns.

I have always recognized the contradictions involved in being a fan of big-time college football. The transformation of intercollegiate athletics into an extraordinarily profitable big business enterprise has been central to the neoliberal restructuring of our nation’s once great public educational institutions—whose mission in the years following the Second World War, as the historian Alan Taylor observes, was to produce both economic goods and a capable democratic citizenry—into privatized entertainment and patent-generating research complexes, which are reliant on the exploitation of the cheap labor of student-athletes, graduate students, support staff, and a faculty increasingly made up of what Herb Childress terms “the adjunct underclass.”

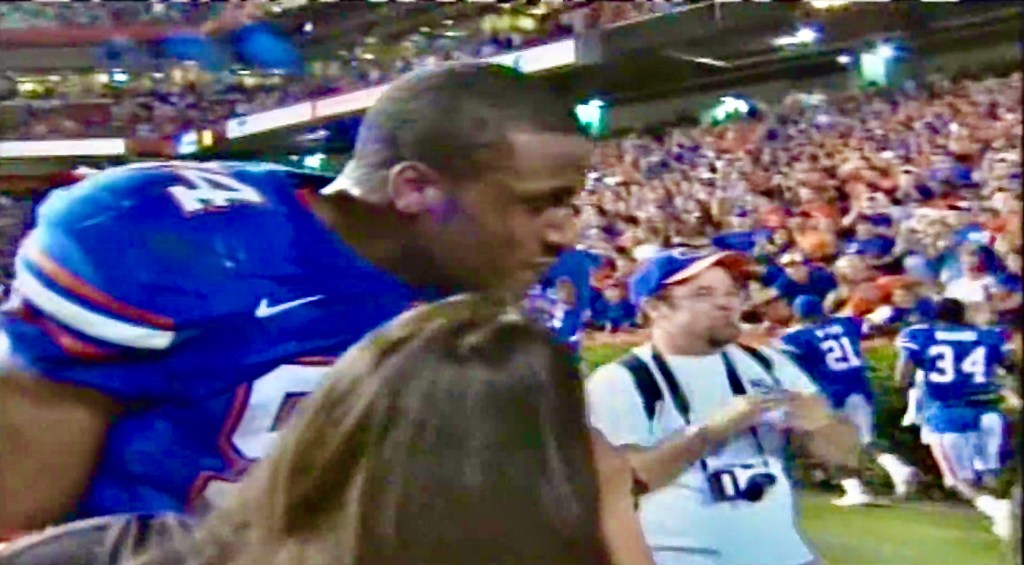

This contradiction came home to me in the 2006 national championship season. That fall, I had the extraordinary opportunity to work as part of a sideline camera crew for home games. This gave me the chance to take on-the-field photos of the action (a handful of which I have posted here) and I even momentarily appear on camera during CBS’s post-game interview with defensive end Jarvis Moss after he had blocked the game-winning field goal attempt by an upstart South Carolina team then being coached by Spurrier. Moss’s heroic efforts propelled the team to subsequent victories in the Southeastern Conference (SEC) Championship and ultimately the National Championship games.

However, my exuberance over the football team’s success that season was dampened by the fact that in the same fall semester our university’s upper administration used the pretext of an economic emergency to launch a devastating assault on the humanities at the University of Florida, beginning a series of cuts and restructurings that in the subsequent decade would halve the number of faculty members in our department and greatly reduce graduate student admissions.

Nevertheless, in 2006 the links between the fortunes of the football program, both on the field and off, and the political interests behind the restructuring of higher education in our state and nation were indirect and not evident to most people. (For a powerful study of these changes, I recommend this essay by my colleague Kim Emery. In another early sign of the changing realities, UF’s President at that time, Bernie Machen, would in early 2008 take the “unusual” step of endorsing Senator John McCain in his unsuccessful bid for the presidency.) Moreover, there have been and continue to be real rewards for my fandom, as my support of the Gators has enabled me to establish deep and long-lasting friendships with some extraordinary people in our community that I otherwise may have never met.

During the 2008 presidential elections and shortly before UF played Alabama for the SEC Championship, my then five-year-old son asked me, “Why don’t we hate Alabama fans like we hate Republicans?” His question took me aback and I replied that we didn’t hate either group—indeed, even some members of his extended family supported Republican candidates. But I also pointed out that I thought sports and political partisanship differed substantially, in that the former was for fun and should be forgotten as soon as the game was over, whereas the latter has significant and long-lasting consequences for the lives of many people.

In 2020, thanks to the Trump administration and its disastrous response, among so many other failures, to the coronavirus pandemic, this has changed. Early in the summer, when the major college football conferences were weighing whether or not to hold the season, the Trump administration along with other political leaders and Trump-supporting governors, especially in southern states like Florida, put pressure on universities to continue on despite the recommendations of medical experts and the significant potential risks to players, fans, and the communities involved. These political leaders felt that big-time college sports were popular among their supporters and that by demanding that things go on as usual, they could distract from the realities of the pandemic, help turn around the economic collapse their failed efforts had produced, and thereby increase their chances of remaining in power.

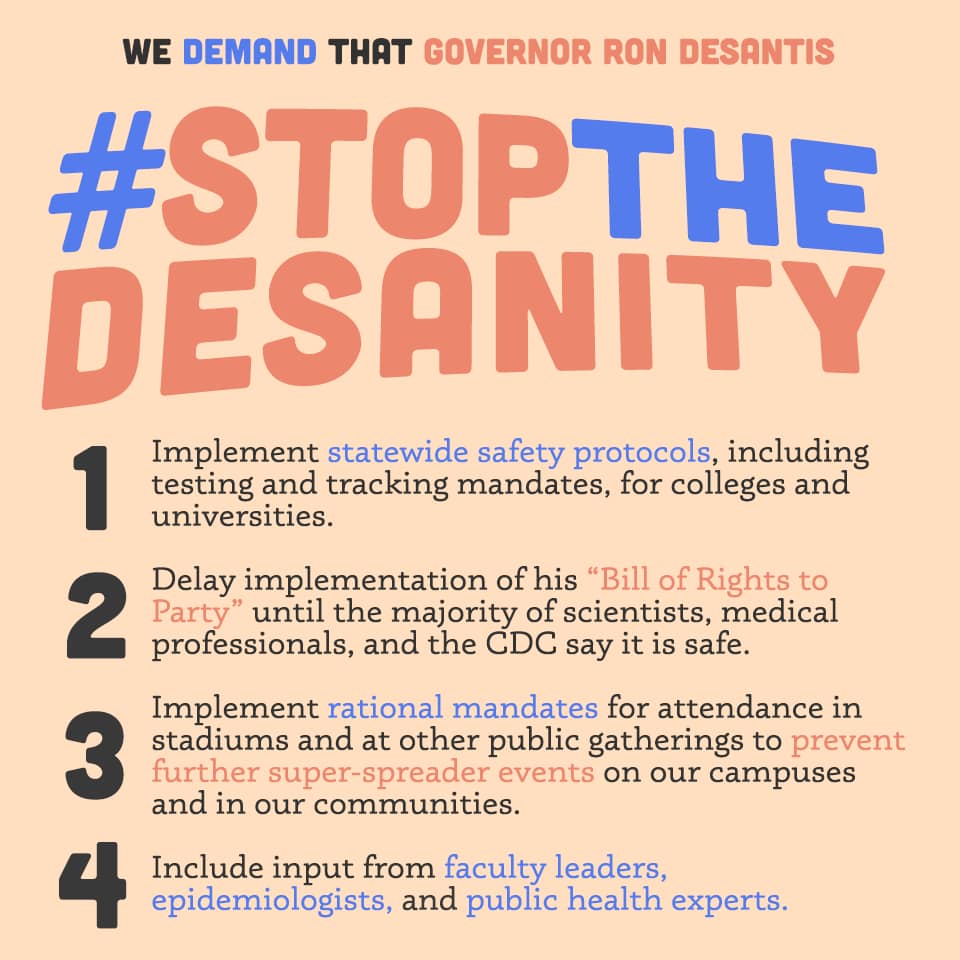

A recent study by the Brookings Institution concludes that “Partisan affiliation is often the strongest single predictor of behavior and attitudes about COVID-19, even more powerful than local infection rates or demographic characteristics, such as age and health status.” The same is now the case for college football, as support for playing the game as usual has become a sign of support for Trump. Florida’s current governor Ron DeSantis is in his own words a “Pitbull Trump Defender,” and even ran a commercial during his 2018 campaign showing him prompting his young son to build Trump’s wall and glory in Trump’s reality TV slogan, “You’re fired!” (Ironically, last Friday, Trump told a crowd of his Florida supporters that if DeSantis fails to deliver the state to him in the upcoming election, “I’ll fire him somehow. I’m going to fire him. I will find a way.”) In the days leading up to the Gators’ season opener, DeSantis thus not unexpectedly began to rail against what he termed the state universities’ “draconian” public health policies and threatened to force through a student bill of rights “that would preclude state universities from taking actions against students who are enjoying themselves.” He followed up on this threat of a “Bill of Rights to Party” by issuing an executive order allowing bars and restaurants to open up at 100% capacity and limiting local municipalities’ ability to do anything to curb them. Furthermore, in an act of “executive grace,” he suspended all outstanding fines and penalties that had been applied against individuals who had violated pandemic-related mandates such as mask and social distancing requirements. DeSantis opined, “I think we need to get away from trying to penalize people for social distancing. All these fines we’re going to hold in abeyance and hope that we can move forward in a way that’s more collaborative.”

The consequences of these decisions were amply on display during UF’s October 3 home opener. Although there was enforcement of social distancing and mask-wearing rules on UF’s campus and in its stadium, in the neighborhoods surrounding the campus it was pretty much business as usual, with street-side tailgating, open-container drinking (something not even allowed in normal seasons), and inadequately socially distanced game-watching parties. There were reports of packed local bars, even though the kick-off was at noon. There was also a rash of thefts of yard signs supporting Joe Biden’s campaign. When I contacted local authorities to report violations in our own neighborhood—including an adult tailgater urinating on a neighbor’s fence—the official with whom I spoke said that while they were deeply sympathetic and supportive, the governor had effectively tied their hands when it came to efforts to protect our community.

The following week, DeSantis upped the ante by declaring that all sports stadiums statewide had the right to operate at full capacity. The consequences of this new directive came to a head in the aftermath of the Gators’ October 10 upset loss at Texas A&M. Following the game, head coach Dan Mullen declared that he hoped that for the next game, “the UF administration decides to let us pack the Swamp against LSU — 100%.” He went on, “The governor has passed a rule that we’re allowed to pack the Swamp and have 90,000 in the Swamp to give us the home-field advantage Texas A&M had today.” The Swamp is the nickname established by Spurrier in the early 1990s for Florida’s Ben Hill Griffin Stadium, the twelfth largest college football stadium by capacity in the nation and the eighteenth largest in the world. When it is full, as I can personally attest from my on-field game experiences, it is also one of the loudest, a definite advantage for the home team.